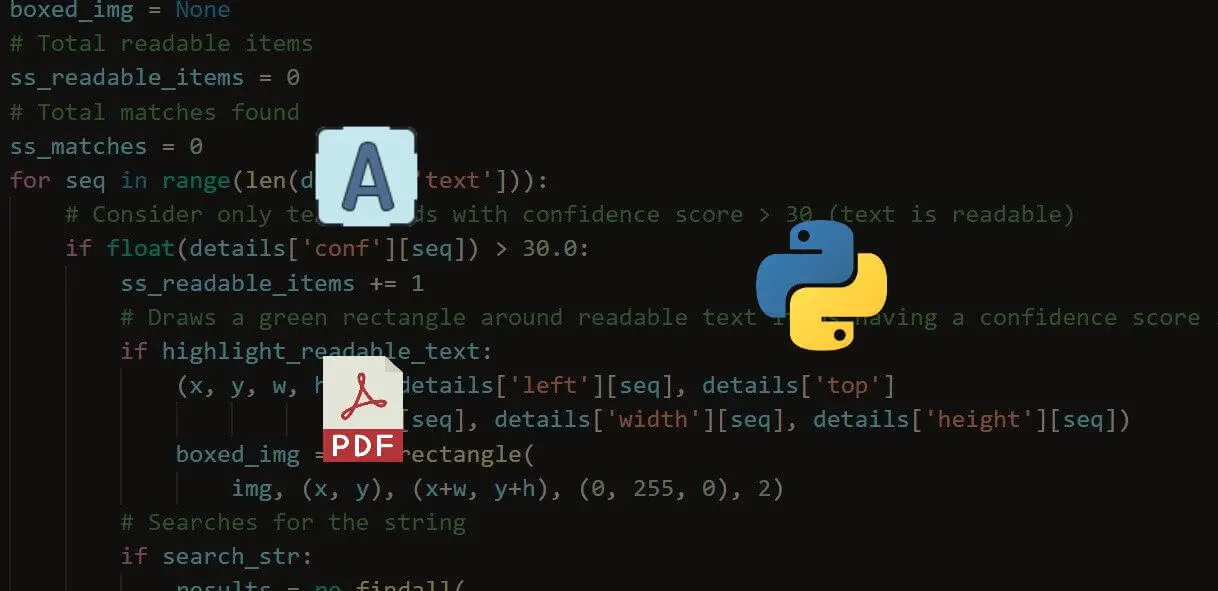

punctuations = ',','] #We initialize the stopwords variable, which is a list of words like "The," "I," "and," etc. tokens = word_tokenize(text) #We'll create a new list that contains punctuation we wish to clean. Step 3: Convert text into keywords #The word_tokenize() function will break our text phrases into individual words. #Now, we will clean our text variable and return it as a list of keywords. It likely contains a lot of spaces, possibly junk such as '\n,' etc. Type print(text) to see what it contains. #PDF EXTRACT TEXT PYTHON PDF#else: text = textract.process(fileurl, method='tesseract', language='eng') #Now we have a text variable that contains all the text derived from our PDF file.

if text != "": text = text #If the above returns as False, we run the OCR library textract to #convert scanned/image based PDF files into text. It's done because PyPDF2 cannot read scanned files.

while count < num_pages: pageObj = pdfReader.getPage(count) count =1 text = pageObj.extractText() #This if statement exists to check if the above library returned words.

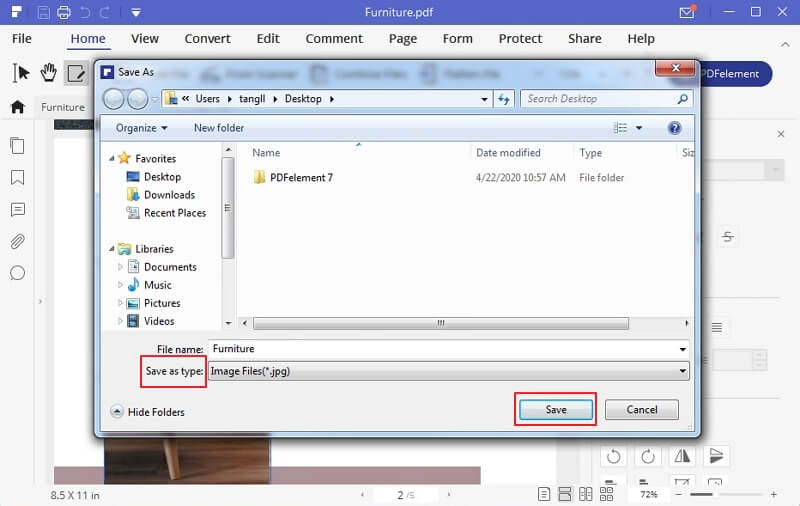

num_pages = pdfReader.numPages count = 0 text = "" #The while loop will read each page. pdfReader = PyPDF2.PdfFileReader(pdfFileObj) #Discerning the number of pages will allow us to parse through all the pages. pdfFileObj = open(filename,'rb') #The pdfReader variable is a readable object that will be parsed. filename = ' enter the name of the file here' #open allows you to read the file. Step 1: Import all libraries import PyPDF2 import textract from nltk.tokenize import word_tokenize from rpus import stopwords Step 2: Read PDF file #Write a for-loop to open many files (leave a comment if you'd like to learn how). Start up your favorite editor and type: Note: All lines starting with # are comments. In order to do this, make sure your PDF file is stored within the folder where you’re writing your script. #PDF EXTRACT TEXT PYTHON DOWNLOAD#This will download the libraries you require to parse PDF documents and extract keywords. #PDF EXTRACT TEXT PYTHON INSTALL#NLTK (to clean and convert phrases into keywords)Įach of these libraries can be installed with the following commands inside terminal (on macOS): pip install PyPDF2 pip install textract pip install nltk.textract (to convert non-trivial, scanned PDF files into text readable by Python).PyPDF2 (to convert simple, text-based PDF files into text readable by Python).You will require the following Python libraries in order to follow this tutorial: You can use any version you like (as long as it supports the relevant libraries). Product.page_number=6 product.text()='Natural Dates, 500g\nHeba / Sky Light / Sapphire' price.text()='9895\n120.For this tutorial, I’ll be using Python 3.6.3. 
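The keyword-cleaning step of this tutorial (tokenize the text, then drop stop words and punctuation) can be sketched without NLTK so the filtering logic is easy to see. This is only an illustration: the regex tokenizer, the tiny `STOP_WORDS` set, the `PUNCTUATIONS` set and the `extract_keywords` helper are assumptions made for the example, standing in for `word_tokenize()` and NLTK's much longer English stop-word list.

```python
import re

# Hypothetical, deliberately tiny stop-word set; NLTK's English list is much longer.
STOP_WORDS = {"the", "i", "and", "a", "an", "of", "to", "in", "is", "it"}

# Punctuation tokens to drop (an assumption for this sketch; extend as needed).
PUNCTUATIONS = {",", ".", ";", ":", "(", ")", "[", "]"}


def extract_keywords(text):
    """Split text into tokens and keep those that are neither stop words nor punctuation."""
    # \w+ matches a run of word characters; [^\w\s] matches one punctuation mark.
    # Whitespace (including '\n') is never matched, so it is discarded.
    tokens = re.findall(r"\w+|[^\w\s]", text)
    return [
        token
        for token in tokens
        if token.lower() not in STOP_WORDS and token not in PUNCTUATIONS
    ]


sample = "The invoice, dated 2019,\nlists the price of dates and tomatoes."
keywords = extract_keywords(sample)
print(keywords)
```

With NLTK installed, the same list comprehension would work on the `tokens`, `stop_words` and `punctuations` variables from the tutorial; comparing `token.lower()` matters because stop-word lists are typically lowercase.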
Hey, I've spent quite a bit of time looking at extracting text as accurately as possible from PDFs, and it turns out that it is not as simple as it might seem. It is especially tricky once you get a wide variety of PDFs (including PDFs with image-based text or tables).

While I unfortunately cannot share the code I used to extract this text, I will tell you that for what I think you're doing, the best solution will require a few things. I've spent a long time going over open source solutions to this, and the best two I'd say are Excalibur and Apache Tika.

Unfortunately, there is no one Python module that is going to extract PDF text correctly 100% of the time. This is because once you start to work with a wide variety of PDFs that aren't as straightforward as just text in a document, you introduce a stochastic element to the problem. This means you have to bring in more complicated OCR or ML approaches that are far from 99 or 100% accurate.

Feel free to PM me if you have any more questions!

    from py_pdf_parser.loaders import load_file

    # Each price is "above" the description and nearly always "aligned" in a "column".
    # The "in line" filters have a capped tolerance which is too small
    # for some products in this catalog as the price is not always directly
    # "in line" - we can modify the x0,x1 coords directly to use a larger
    price = prices.vertically_in_line_with(product).above(product)

    product.page_number=6 product.text()='Tomato Salad / Italian Plum, 1kg\nEsprit Vert' price.text()='11995\n165.00'
    product.page_number=6 product.text()='Laitue Butterhead, \nField Good' price.text()='2495\n35.00'
    product.page_number=6 product.text()='Natural Dates, 500g\nHeba / Sky Light / Sapphire' price.text()='9895\n120.
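The matching idea described above (each price sits above its product in roughly the same column, and the horizontal tolerance sometimes has to be widened) can be illustrated with plain bounding boxes. The `Box` class, the `find_price_above` helper and all coordinates are hypothetical; this is a geometric sketch that assumes PDF-style coordinates where y grows upward, not py_pdf_parser's API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Box:
    text: str
    x0: float  # left edge
    x1: float  # right edge
    y0: float  # bottom edge
    y1: float  # top edge


def find_price_above(product: Box, prices: List[Box], tolerance: float = 0.0) -> Optional[Box]:
    """Return the nearest price above `product` whose x-range overlaps the
    product's x-range after widening it by `tolerance` on both sides."""
    left, right = product.x0 - tolerance, product.x1 + tolerance
    candidates = [
        p for p in prices
        if p.y0 >= product.y1                # entirely above the product
        and p.x0 <= right and p.x1 >= left   # overlaps the widened column
    ]
    # Pick the vertically closest candidate, or None if there is none.
    return min(candidates, key=lambda p: p.y0 - product.y1, default=None)


product = Box("Laitue Butterhead", x0=100, x1=180, y0=300, y1=320)
prices = [
    Box("35.00", x0=185, x1=215, y0=330, y1=345),   # slightly right of the product column
    Box("165.00", x0=400, x1=440, y0=330, y1=345),  # a different column
]

strict = find_price_above(product, prices)                 # zero tolerance
relaxed = find_price_above(product, prices, tolerance=10)  # widened column
print(strict, relaxed)
```

With zero tolerance the slightly offset price is missed; widening the column by 10 units picks it up while still ignoring the price in the other column. A tolerance that is too large would start matching neighbouring columns, so it is worth tuning against the actual layout.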
